HashiCorp Field CTO Weekly: Cyberbunnies and Sad Farewells

Volume 104

This week’s newsletter comes to you a little behind schedule. It is dedicated to our beautiful fur baby Frida, who we said goodbye to last week. She and her sister Foxy were a part of our family’s lives for more than 15 years, and as much as I was keen to try and share some of what I’ve been observing in the industry, I decided to take some time to grieve with the family and remember the fun times we all had together.

Vale Frida.

I will segue into our technical content through another animal story, entirely by coincidence. My kids have been asking me to buy them a rabbit for a little while now. I’ve been holding out because kids’ pets inevitably become the parent’s responsibility. Since we live a fair way out of the city, we do have a few wild rabbits around the place, so I decided on a cunning plan. I tasked them with catching a rabbit - confident in the fact that there was no way two kids could successfully manage to do it. They set up this sophisticated trap to put into the garden, and I promptly directed my attention to other matters. More on that in a moment.

Encryption is as encryption does.

While I called the trap “sophisticated”, what I really meant was rudimentary, which appears to be how Optus CEO Kelly Bayer Rosmarin also used the word during a media briefing about the Australian data breach that allegedly resulted in many millions of customers having their personal information stolen via an unauthenticated, public-facing API. This has been a hot story in Australia for the last few weeks, with good reason. Optus is one of the three major telcos, with a not insignificant market share.

According to Ms Bayer Rosmarin, the attack penetrated multiple security layers.

What I can say, which will help people understand that it’s not as it’s being portrayed, is that our data was encrypted.

But if the data was encrypted, how could the attackers access it as clear text? This is a conversation I regularly have with customers - encryption can be implemented in multiple places and defends against different vectors. One traditional pattern for encryption is to attach your storage array to a Hardware Security Module (HSM), which protects against physical threats, such as theft of your array or the disks inside it. But what if someone compromises your backups and restores your virtual machine?

So, we move encryption one step higher in the stack and encrypt the virtual machine that your database runs in, preventing it from being backed up and restored to another environment. Progress! But what happens when someone restores a database backup?

It turns out that databases can be encrypted on disk as well, which prevents their files from being copied off the disk and moved to another machine. But what happens when there is an attacker on the network?

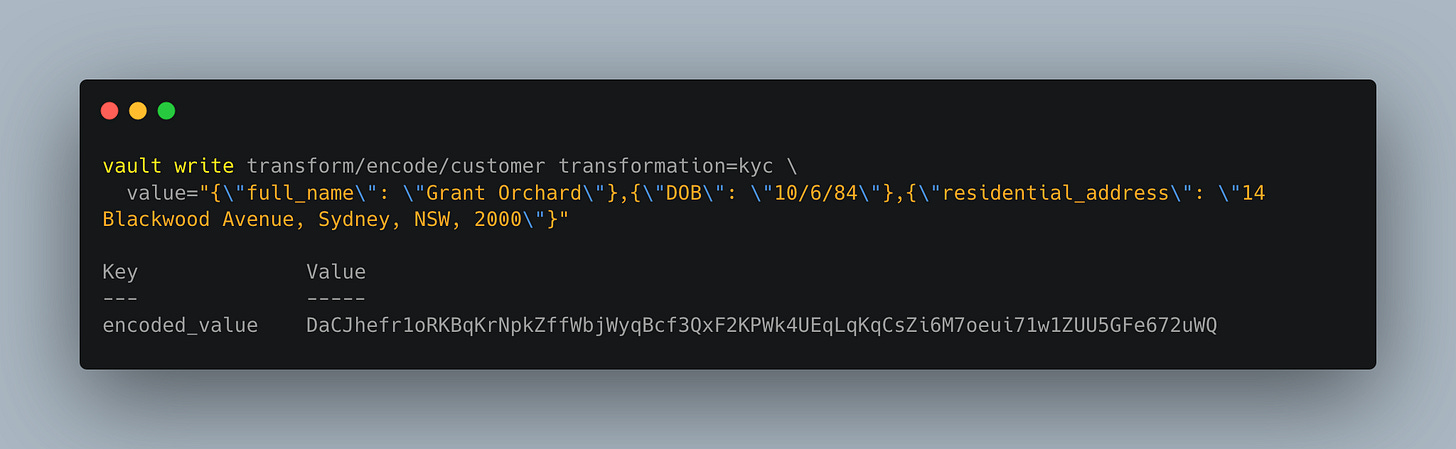

More recent approaches that provide field-level encryption of your data help with the “on-network” threat, as can leveraging tokenisation. But… what happens when the compromise occurs on an upstream API with permissions to decrypt the ciphertext, or retrieve the data associated with the token?

Tokenisation exchanges a sensitive value for an unrelated value called a token.
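
To make that concrete, here is a minimal sketch of the idea in Python. It is a toy, in-memory illustration rather than how any real product implements tokenisation: the `TokenVault` class, its method names, the `tok_` prefix, and the `caller_is_authorised` flag are all hypothetical names I have made up for this example.

```python
import secrets


class TokenVault:
    """Toy in-memory token vault (hypothetical; not a real product API)."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenise(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_hex(16)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenise(self, token: str, caller_is_authorised: bool) -> str:
        # Only callers with permission can exchange a token for the real value.
        if not caller_is_authorised:
            raise PermissionError("caller may not detokenise")
        return self._token_to_value[token]


vault = TokenVault()
licence_number = "NSW-12345678"  # made-up example value
token = vault.tokenise(licence_number)
```

Downstream systems only ever store and pass around `token`, so a stolen database or a sniffed network packet yields nothing useful. The catch is the same one raised above: any API that is allowed to call `detokenise` with `caller_is_authorised=True` will hand back the real value to whoever manages to drive it.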

I won’t keep playing the “but what happens” scenario escalation game, as I think I’ve made my point. Protecting sensitive information is a challenge that crosses infrastructure, data, application, and cybersecurity teams. It’s also why compliance checklists with “encrypted-at-rest” or “encrypted-in-transit” are terrible. There is no context, no appreciation for what it is that you’re attempting to protect against. If you set a minimum bar, then people will do the minimum required to pass it.

As Optus pointed out in their response to the Attorney-General’s Department’s long-running review of the Privacy Act, “there are significant technical hurdles” to implement tighter security controls for Personally Identifiable Information (PII). While I can’t speak to the costs involved, I wonder how they compare to the reputational damage, loss of customer trust, and impact on future revenue that are the likely outcomes of this incident?

As much as I love technology, it strikes me that the best way to protect sensitive information is not encryption or tokenisation, but rather not collecting it in the first place.

Solving the right problem?

Decisions about what kind of personal information is collected by organisations offering a service in Australia fall under the auspices of AUSTRAC. It requires that organisations verify that customers are real people - aka “Know Your Customer”. At the same time, our federal Privacy Act says only that information must be destroyed “where the entity no longer needs the information for any purpose for which the information may be used or disclosed by the entity”.

In short, our laws and regulations don’t compel organisations to behave in a way that best protects us as citizens. I could talk about this all day, but since the vast majority of our readers are not Australian, let me bring this back to a more central theme that (I hope!) resonates.

As technologists, it is far too easy for us to go looking for new tools, packages, and frameworks to solve the problems we see in front of us. I have spoken with many, many customers who saw the public cloud as the answer to all the problems they had with moving quickly, and spun up programs of work to start dropping net-new workloads into the cloud. Imagine their surprise when they discovered that they weren’t moving any faster - that the bottleneck was actually a result of decades-old mindsets and team topologies built for reducing the risk of change through highly introspective processes.

One thing we found surprising was that the biggest predictor of an organization's software security practices was cultural, not technical: high-trust, low-blame cultures — as defined by Westrum — focused on performance were significantly more likely to adopt emerging security practices than low trust, high-blame cultures focused on power or rules.

In our last newsletter, Ray shared some thoughts on the role of the Platform Team, which is one response to organisational optimisation that is starting to trend. I really enjoyed Forrest Brazeal’s take on this, and I’ll admit to some concern about the likelihood of platform team “washing” over the next few years. One way that I’d like to see us begin combating this as an industry is by not just staffing these teams with highly skilled engineers, but also by pulling in people from product management and design backgrounds - the kinds of people who know how to engage the folks consuming the platform, know how to elicit information from people about how to become better, and treat the platform as something built to serve the needs of others, rather than solving for their own needs.

You don’t need a sophisticated trap to catch a stupid rabbit.

This week, we explored a few ideas but there are a couple of key takeaways that I’d like to reinforce.

Trust is a precious commodity, and protecting it is worth nearly any cost.

Understand what you are protecting, and what you’re protecting it from.

Solve for the needs of your customers, not for your own needs.

Kids will always surprise you.

I guess the fourth point requires me to finish my story about the rabbit-catching challenge. A picture paints a thousand words, and while I’m sure there is some parallel to be drawn about sophisticated traps and stupid rabbits, I have to go buy a hutch and some carrots.

Thanks for reading!

- Grant